Updated: April 2026 | Reading time: 18 min

Introduction

Modern infrastructure does not generate logs from one place. Kubernetes pods, API services, load balancers, databases, serverless functions, CI/CD pipelines, cloud-managed services, and edge devices all produce log data continuously. That data has real value: it is how engineers investigate incidents, track application behavior, audit access, and meet compliance requirements. But raw logs scattered across dozens of sources are not useful until they are collected and centralized. That is the job of log aggregation tools.

Log aggregation tools pull log data from wherever it originates, normalize and filter it in transit, and route it to wherever it needs to go for analysis, storage, or alerting. Without reliable log aggregation software, the downstream analytics layer has incomplete or inconsistent data to work with.

This guide compares the 10 best log aggregation tools in 2026, explains the important distinction between log aggregation and log management, and helps you choose the right option based on your scale, architecture, and operational overhead tolerance.

One honest note before the comparison starts: this is a deliberately mixed list. Some tools here are purpose-built edge collectors. Others are routing pipelines. Some are full backends that accept log data directly. The list is structured this way because teams evaluate them in the same conversation, and choosing between them requires understanding what layer of the problem each one solves.

What are log aggregation tools?

Log aggregation tools are software components that collect log data from multiple sources, apply lightweight processing in transit, and deliver that data to a destination for storage or analysis. Their primary purpose is centralization: turning a fragmented flow of log output across many services and hosts into a single, consistent stream that can be queried and acted upon.

Log aggregation tools work at the collection and routing layer. They do not, in general, provide long-term durable storage, a searchable query interface, retention management, alerting, or dashboards. Those responsibilities fall to the log management layer downstream.

Why does log aggregation matter beyond simple forwarding?

Because infrastructure teams typically need log data to arrive reliably (with buffering and retry), quickly (with low added latency), efficiently (with compression and batching), and cleanly (with consistent field structure). A raw log stream forwarded directly from application to backend often arrives inconsistently formatted, unbuffered, and without the Kubernetes or infrastructure metadata needed for useful filtering. Log aggregation software fills that gap.
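The reliability and efficiency properties above (batching, compression, retry) can be sketched in a few lines. This is a minimal illustration, not how any particular collector implements them; `batches`, `encode`, `ship`, and the `send` callback are hypothetical names introduced here.

```python
import gzip
import json
import time
from typing import Callable, Iterable, Iterator, List


def batches(records: Iterable[dict], size: int) -> Iterator[List[dict]]:
    """Group records into fixed-size batches (the last one may be smaller)."""
    batch: List[dict] = []
    for record in records:
        batch.append(record)
        if len(batch) == size:
            yield batch
            batch = []
    if batch:
        yield batch


def encode(batch: List[dict]) -> bytes:
    """Serialize a batch as newline-delimited JSON and gzip it."""
    payload = "\n".join(json.dumps(r, sort_keys=True) for r in batch)
    return gzip.compress(payload.encode("utf-8"))


def ship(batch: List[dict], send: Callable[[bytes], None],
         retries: int = 3, base_delay: float = 0.5) -> bool:
    """Try to deliver one batch, backing off exponentially after each failure."""
    body = encode(batch)
    for attempt in range(retries):
        try:
            send(body)
            return True
        except ConnectionError:
            time.sleep(base_delay * (2 ** attempt))
    return False  # caller decides whether to buffer to disk or drop
```

A real collector would also bound its buffer and persist undelivered batches, which is where most of the tuning effort goes.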

Log aggregation vs. log management

The distinction between log aggregation and log management is one of the most useful things to understand before evaluating tools in this category, and most comparison articles skip it entirely.

Log aggregation covers the collection and routing phase. It is a transport and transformation function. Log aggregation tools receive raw data from sources, apply processing rules, and deliver it to a destination. They are not responsible for storing data long-term or providing a query interface. They complete their job when the data arrives at the backend.

Tools that fit this description: Fluent Bit, Fluentd, Vector, Logstash, Filebeat, the OpenTelemetry Collector, Cribl Stream.

Log management covers the broader lifecycle of log data after it arrives. Log management platforms store log data durably, index it for search, expose a query interface for investigation, support alerting rules, enforce retention policies, and provide the access controls and audit trails that compliance workflows require. Engineers use log management tools when debugging incidents, not when configuring pipelines.

Tools that fit this description: Parseable, Elasticsearch, Splunk, Datadog, Grafana Loki, Sumo Logic, New Relic.

Most production systems need both. The aggregation layer collects and normalizes; the management layer stores and answers questions. The challenge is that stitching these layers together adds operational complexity. Each tool in the pipeline has its own configuration language, failure modes, scaling characteristics, and upgrade lifecycle. When something breaks during an incident, you need to determine which layer failed before you can fix anything.

The trend in 2026 is toward architectures that reduce this gap. Teams running Fluent Bit directly into Parseable or the OpenTelemetry Collector directly into a backend that accepts OTLP are collapsing what used to be a five-tool pipeline into two. That simplification is real, and it is one of the reasons Parseable leads this list.

What to look for in the best log aggregation tools

Choosing the right log aggregation software involves more than comparing feature lists. These are the dimensions that matter most in practice:

- Source and agent support: Your aggregation tool needs to collect logs from every source in your environment. Check native support for Kubernetes DaemonSet deployment, file-based log tailing, syslog, HTTP endpoints, cloud provider log services, and OpenTelemetry-instrumented applications. Gaps here mean maintaining additional collectors for specific sources.

- Parsing, filtering, and enrichment: The ability to parse unstructured log lines into structured fields, drop high-volume noise events before they consume storage, and enrich records with metadata at collection time directly affects the quality and cost of what lands in the backend. Tools with strong in-flight processing reduce the work the storage layer has to do.

- Real-time routing and live tailing: Some use cases require logs to reach a backend with minimal latency. Incident response workflows benefit from near-real-time routing. Check whether the tool introduces meaningful lag and whether it supports sending the same stream to multiple destinations simultaneously.

- Storage and retention flexibility: Not every log source should go to the same backend. Good log aggregation software supports routing different log types to different destinations: high-value application logs to a queryable backend, compliance-required logs to long-term archive, high-volume debug logs to cheaper storage. Routing flexibility reduces backend costs and simplifies retention management.

- Correlation with metrics and traces: Modern incident investigation crosses signal types. If your team regularly moves from a metric alert to related logs to distributed traces, consider whether your aggregation tool is part of a pipeline designed to carry all signal types or only handles logs in isolation.

- Cost and operational overhead: Every component in a logging pipeline adds operational cost: configuration management, version compatibility, tuning for backpressure, monitoring for dropped events, and on-call responsibility when it fails. Lightweight, low-resource tools reduce this tax. Tools that eliminate pipeline layers entirely reduce it further.
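To make the parsing, filtering, enrichment, and routing criteria concrete, here is a small sketch of what in-flight processing does to a single log line. The line format, the `node` field, and the destination names (`archive`, `cheap_storage`, `query_backend`) are all assumptions chosen for illustration.

```python
import re
from typing import Optional

# Assumed line shape: "<timestamp> <LEVEL> <service> <free-text message>"
LINE = re.compile(r"^(?P<ts>\S+) (?P<level>[A-Z]+) (?P<service>\S+) (?P<message>.*)$")


def process(line: str, node: str) -> Optional[dict]:
    """Parse a raw line, drop DEBUG noise, and enrich with the node name."""
    match = LINE.match(line)
    if match is None:
        # Keep unparseable lines, but mark them so they can be routed separately.
        return {"message": line, "parse_error": True, "node": node}
    record = match.groupdict()
    if record["level"] == "DEBUG":
        return None  # filtered out before it costs storage
    record["node"] = node
    return record


def route(record: dict) -> str:
    """Pick a destination by log type."""
    if record.get("service") == "audit":
        return "archive"
    if record.get("parse_error"):
        return "cheap_storage"
    return "query_backend"
```

When evaluating a tool, the useful question is how much of this logic it can express natively and how visible it makes the records that fail to parse.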

10 best log aggregation tools in 2026 at a glance

| Tool | Best For | Type | Open Source | Deployment | Starting Price | Key Strength |
|---|---|---|---|---|---|---|
| Parseable | Simplifying aggregation and analytics in fewer layers | Observability platform with direct ingestion | Yes (AGPL-3.0) | Cloud + self-hosted | $0.37/GB (Cloud); free self-hosted | S3-native storage, SQL queries, full MELT |
| Fluent Bit | Lightweight edge collection in Kubernetes | Edge collector | Yes (Apache 2.0) | DaemonSet / binary | Free | Sub-30 MB RAM, Kubernetes-native |
| OpenTelemetry Collector | Vendor-neutral multi-signal pipelines | Aggregation pipeline | Yes (Apache 2.0) | Binary / sidecar / DaemonSet | Free | Handles logs, metrics, traces in one agent |
| Vector | High-throughput pipelines with type-safe transforms | High-performance pipeline | Yes (MPL 2.0) | Binary / DaemonSet | Free | Rust performance, VRL transform language |
| Fluentd | General-purpose routing with broad plugin support | Routing aggregator | Yes (Apache 2.0) | Container / binary | Free | 500-plus plugins, tag-based routing |
| Logstash | Complex ETL transforms in Elastic-centric pipelines | ETL pipeline | Partial (SSPL) | Container / binary | Free | Grok parsing, rich filter library |
| Cribl Stream | Enterprise routing, volume reduction, multi-destination | Enterprise pipeline platform | No (free tier to 1 TB/day) | Cloud + self-hosted | Free up to 1 TB/day | Data reduction, visual pipeline builder |
| Filebeat | Lightweight file shipping into Elastic workflows | Log file shipper | Partial (Elastic License) | Binary / DaemonSet | Free | Simple setup, Elastic module ecosystem |
| Datadog | Log aggregation inside a complete observability suite | Full observability platform | No | SaaS only | $0.10/GB ingestion + indexing fees | Breadth, integrations, trace-to-log correlation |
| Grafana Loki | Open-source log backend for Grafana-native teams | Log storage backend | Yes (AGPL-3.0) | Cloud + self-hosted | Free (OSS); ~$0.50/GB Grafana Cloud | Object storage backend, Grafana integration |

Detailed review of the best log aggregation tools

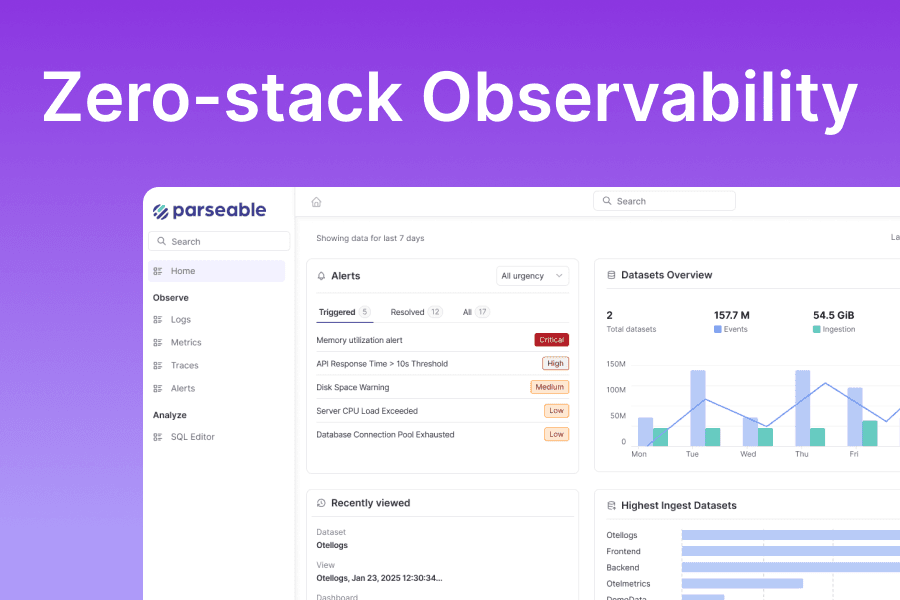

1. Parseable: Best modern platform for simplifying log aggregation and analysis

Parseable occupies a different position on this list than the pure edge collectors below it. It is not a lightweight forwarder that sits on every node collecting container logs. It is the destination that eliminates the need for the complex middle layers between collection and analysis.

The conventional logging pipeline looks like this: application logs go to Fluent Bit, which routes them to Kafka, which feeds Logstash, which writes to Elasticsearch, which Kibana queries. That is five tools, five configuration surfaces, five failure modes, and a substantial operational burden before anyone can search a log line. Parseable collapses that architecture.

With Parseable, the pipeline becomes: Fluent Bit or the OpenTelemetry Collector ships logs directly to Parseable's ingestion endpoint, and Parseable handles storage, indexing, querying, and alerting from that point forward. There is no intermediate queue, no ETL layer, no separate search backend. Parseable Cloud makes this even simpler: provision an endpoint, configure your collector, and start querying in minutes.
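As a rough sketch of what "ship directly to the ingestion endpoint" means at the HTTP level, the snippet below builds (but does not send) a JSON ingest request. The `/api/v1/ingest` path, the `X-P-Stream` header, and basic authentication are assumptions here and should be checked against Parseable's current API documentation; `build_ingest_request` is a hypothetical helper.

```python
import base64
import json
import urllib.request
from typing import List


def build_ingest_request(base_url: str, stream: str, username: str,
                         password: str, events: List[dict]) -> urllib.request.Request:
    """Build a POST request carrying a JSON array of log events."""
    token = base64.b64encode(f"{username}:{password}".encode()).decode()
    return urllib.request.Request(
        url=f"{base_url}/api/v1/ingest",      # assumed endpoint path
        data=json.dumps(events).encode("utf-8"),
        method="POST",
        headers={
            "Authorization": f"Basic {token}",
            "Content-Type": "application/json",
            "X-P-Stream": stream,             # assumed header naming the target stream
        },
    )
```

Passing the result to `urllib.request.urlopen` would send it. In practice a collector such as Fluent Bit or the OpenTelemetry Collector does this for you, with batching and retry on top.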

This matters for teams evaluating log aggregation tools because the aggregation layer does not exist in isolation. The best tool for aggregation is often the one whose destination requires the least overhead.

Why Parseable stands out

- S3-native storage eliminates the indexing tax: Parseable stores all telemetry on S3-compatible object storage in Apache Parquet format. Storage costs run at S3 rates ($0.023/GB/month) rather than at the per-GB premium that indexed backends charge. Parquet's columnar structure enables fast analytical queries without a separate indexing layer, because predicate pushdown in the query engine skips irrelevant row groups at read time.

- SQL querying with no proprietary syntax: Parseable uses standard SQL via Apache Arrow DataFusion. Most engineers already know SQL, so there is no new query language to learn, no SPL certification, and no LogQL syntax to remember at 3 AM. Natural language querying via LLM integration is also available for teams that want to ask questions in plain English during incident response.

- Full MELT observability, not just logs: Parseable accepts logs, metrics, and traces through the same native OTLP endpoint. Teams that route all telemetry through a single OTel Collector can point it at one Parseable endpoint and get unified observability without maintaining separate Prometheus, Jaeger, and log backends. Cross-signal correlation uses SQL JOINs at the data layer, not UI-level linking.

- No label cardinality limits: Because every field is a Parquet column rather than an index label, high-cardinality fields like `user_id`, `trace_id`, `pod_name`, and `request_id` are all first-class queryable columns. There are no cardinality ceilings that degrade performance or produce write failures.

- Open data format: All data is stored in Apache Parquet on your S3 bucket. It is readable by DuckDB, Spark, Athena, or any Parquet-compatible tool independent of Parseable. You retain control of your data at the storage layer, which matters for data residency requirements and long-term portability.

- AI-native analysis: Parseable integrates with Claude and other LLMs for natural language log queries. Engineers can describe what they are investigating and receive SQL generated automatically, which reduces reliance on syntax recall during time-sensitive incidents.
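The row-group skipping mentioned in the first point can be illustrated with a toy model. Parquet files record min/max statistics per row group, and a query engine can use them to avoid reading groups that cannot match a filter. The sketch below simulates that idea in plain Python; it is not Parseable's or DataFusion's implementation, and `RowGroup` and `scan` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class RowGroup:
    min_status: int          # smallest value of "status" in this group
    max_status: int          # largest value of "status" in this group
    rows: List[dict]


def scan(groups: List[RowGroup], wanted_status: int) -> Tuple[List[dict], int]:
    """Return matching rows and how many row groups actually had to be read."""
    matches: List[dict] = []
    groups_read = 0
    for group in groups:
        # Skip the group entirely if its statistics rule out a match.
        if not (group.min_status <= wanted_status <= group.max_status):
            continue
        groups_read += 1
        matches.extend(r for r in group.rows if r["status"] == wanted_status)
    return matches, groups_read
```

The benefit depends on how well the data is clustered: if every row group spans the full range of a column, the statistics prune nothing.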

Pricing

Parseable Cloud: Starts at $0.37/GB ingested with a $29/month minimum. Free tier available. No separate indexing fees. No per-host charges.

Self-hosted: Free and open source under AGPL-3.0. You pay only for your S3 bucket and compute. At 100 GB/day with 30-day retention, expect $150 to $350/month total.

Enterprise: An enterprise plan is also available, with BYOC (bring your own cloud) deployment, unlimited retention, Iceberg support, and more.
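For a sense of how the self-hosted estimate breaks down, the storage portion can be computed directly from the S3 rate quoted earlier. The function below is a back-of-the-envelope sketch; `compression_ratio` is a hypothetical parameter, since the actual Parquet compression achieved depends on the data.

```python
def monthly_storage_cost(gb_per_day: float, retention_days: int,
                         price_per_gb_month: float = 0.023,
                         compression_ratio: float = 1.0) -> float:
    """Steady-state monthly object storage cost in dollars.

    compression_ratio is raw size divided by stored size; 1.0 means no compression.
    """
    stored_gb = gb_per_day * retention_days / compression_ratio
    return stored_gb * price_per_gb_month


# 100 GB/day kept for 30 days is 3,000 GB at rest, assuming no compression.
uncompressed = monthly_storage_cost(100, 30)
```

With no compression this comes to roughly $69 per month, so in the $150 to $350 range quoted above, most of the spend would be compute and request costs rather than storage itself.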

Pros

- Collapses multiple pipeline layers into a direct aggregation-to-analytics path

- SQL querying with no proprietary syntax and zero onboarding friction

- Full MELT observability in a single platform: logs, metrics, and traces

- S3-native storage at commodity object store costs

- Open Parquet data format with no storage-layer vendor lock-in

- Long retention at flat S3 economics

- Native OTLP ingestion and compatibility with all major collectors

- AI-native natural language querying

Best-fit note

Parseable is not a standalone edge collector. It works best as the destination in a pipeline where Fluent Bit, the OpenTelemetry Collector, or Vector handles collection from nodes and containers. Teams that need a pure edge-only collector with no backend requirements should look at Fluent Bit or the OTel Collector on their own. Teams that want to minimize the total number of moving parts between collection and analysis will find Parseable the strongest modern option.

See the cost and complexity difference for yourself. Start with Parseable Cloud free and connect your first log stream in under a minute.

2. Fluent Bit: Best lightweight edge log aggregation tool

Fluent Bit is the go-to lightweight log aggregation tool for resource-constrained environments. Written in C with minimal external dependencies, it runs as a DaemonSet on every Kubernetes node with a memory footprint between 10 and 30 MB in typical production deployments. For teams that need log collection at the edge without inflating per-node resource usage, Fluent Bit sets the standard.

Fluent Bit has grown substantially beyond its origins as a simple forwarder. It includes a stream processing engine for filter and transformation logic, native Kubernetes metadata enrichment, and a plugin ecosystem that covers most common input sources and output destinations.

Key features

- Extremely low resource footprint: 10 to 30 MB RAM in typical Kubernetes deployments

- Written in C with no external runtime dependencies

- Built-in Kubernetes metadata enrichment (namespace, pod name, labels, annotations)

- Stream processing engine for filtering and lightweight transformation

- Input plugins for tail, syslog, systemd journal, TCP, HTTP, and more

- Output plugins covering Parseable, Elasticsearch, Splunk HEC, S3, Kafka, and others

- Native Prometheus metrics endpoint for self-monitoring

Pricing

Free and open source under the Apache 2.0 license. Calyptia (now part of Chronosphere) offers commercial support and Fluent Bit Enterprise with additional features.

Pros

- Lowest resource overhead of any production-grade log aggregation tool

- Excellent Kubernetes integration with DaemonSet patterns and metadata enrichment

- Broad plugin support for both inputs and outputs

- Apache 2.0 license with no usage restrictions

Cons

- Transformation capabilities are more limited than Vector or Logstash for complex logic

- Lua-based scripting for custom filters is functional but restricted compared to full programming environments

- Debugging pipeline issues can be difficult: silent drops and misconfigurations are hard to trace

- Plugin quality varies; community plugins may be unmaintained

3. OpenTelemetry Collector: Best vendor-neutral log aggregation tool

The OpenTelemetry Collector is the CNCF standard for vendor-neutral telemetry collection and routing. It handles logs, metrics, and traces in a single agent, which makes it the most practically useful aggregation tool for teams standardizing on OpenTelemetry. Rather than running separate agents for each signal type, the OTel Collector provides a single configuration surface for the entire telemetry pipeline.

Its architecture is clean and composable: receivers accept data from sources (OTLP, filelog, hostmetrics, k8s events, and dozens more), processors apply transformations and filtering, and exporters deliver data to destinations. The Contrib distribution adds hundreds of community components. Because the output format is OTLP, the backend is trivially swappable without touching application instrumentation.
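The receiver, processor, exporter composition can be modeled in a few lines to show why swapping a backend leaves the rest of the pipeline untouched. This is a conceptual sketch only; the real Collector is written in Go and configured in YAML, and the names `drop_debug`, `add_cluster`, and `run_pipeline` are invented for illustration.

```python
from typing import Callable, Iterable, List

Record = dict
Processor = Callable[[List[Record]], List[Record]]
Exporter = Callable[[List[Record]], None]


def drop_debug(records: List[Record]) -> List[Record]:
    """Processor: filter out DEBUG-severity records."""
    return [r for r in records if r.get("severity") != "DEBUG"]


def add_cluster(name: str) -> Processor:
    """Processor factory: attach a cluster attribute to every record."""
    def processor(records: List[Record]) -> List[Record]:
        return [{**r, "cluster": name} for r in records]
    return processor


def run_pipeline(receiver: Iterable[Record], processors: List[Processor],
                 exporters: List[Exporter]) -> int:
    """Pull from the receiver, apply processors in order, fan out to exporters."""
    records = list(receiver)
    for processor in processors:
        records = processor(records)
    for exporter in exporters:
        exporter(records)
    return len(records)
```

Changing the destination means replacing one entry in `exporters`; the receiver and processors do not change, which mirrors the swap-the-backend property described above.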

Key features

- Handles logs, metrics, and traces in a unified pipeline with a single agent

- Vendor-neutral: OTLP output means any backend can receive data without format adapters

- Receivers cover OTLP, filelog, hostmetrics, Kubernetes event collection, and 100-plus others

- Processors include batching, memory limiting, attribute transformation, and sampling

- Contrib distribution with 300-plus community components

- Builder tool for creating custom minimal distributions with only needed components

- CNCF project with broad industry adoption and active development

Pricing

Free and open source under the Apache 2.0 license. Fully vendor-neutral with no commercial requirements.

Pros

- Single agent for logs, metrics, and traces reduces per-node overhead

- Vendor-neutral OTLP output prevents lock-in at the collection layer

- Massive community and CNCF backing with frequent releases

- Clean receiver/processor/exporter architecture that is easy to reason about

- Excellent choice for teams standardizing on OpenTelemetry from the start

Cons

- File-based log collection (filelog receiver) is less battle-tested than Fluent Bit for edge cases

- The Contrib distribution is large; most deployments should build a custom distribution

- YAML configuration becomes verbose for complex pipelines

- Requires careful component selection to avoid binary bloat and unnecessary attack surface

4. Vector: Best for high-throughput pipelines with type-safe transforms

Vector is a high-performance observability data pipeline built in Rust by Timber (acquired by Datadog). It handles logs, metrics, and traces with consistent performance that benchmarks 5 to 10 times faster than Java or Ruby-based alternatives. Its Vector Remap Language (VRL) is a type-safe, purpose-built transformation language with compile-time error checking that catches pipeline logic problems at configuration time rather than at 3 AM.

Vector's design philosophy emphasizes correctness and performance: end-to-end acknowledgements prevent data loss, VRL prevents ambiguous transformation logic, and the Rust runtime eliminates garbage collection pauses that affect Go and Java alternatives under heavy load.
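The idea behind end-to-end acknowledgements is that a source only advances its read position once the sink confirms delivery. The sketch below models that contract in a simplified form; it is not Vector's implementation, and `AckingSource` is a hypothetical class.

```python
from typing import Callable, List


class AckingSource:
    """Reads events in batches and checkpoints only after the sink acknowledges."""

    def __init__(self, events: List[str]) -> None:
        self.events = events
        self.checkpoint = 0  # index of the first event not yet acknowledged

    def run_once(self, sink: Callable[[List[str]], bool], batch_size: int = 2) -> None:
        batch = self.events[self.checkpoint:self.checkpoint + batch_size]
        # If the sink reports failure, the checkpoint stays put and the same
        # batch is retried on the next call: at-least-once, never skipped.
        if batch and sink(batch):
            self.checkpoint += len(batch)
```

The trade-off is that a sink which writes part of a batch and then fails will see those events again on retry, so downstream consumers should tolerate duplicates.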

Key features

- Built in Rust with consistently high ingestion throughput and low resource consumption

- VRL (Vector Remap Language): a type-safe transformation language with compile-time validation

- End-to-end acknowledgements for at-least-once delivery guarantees

- Handles logs, metrics, and traces in a single pipeline

- Unit testing framework built into the configuration format

- Sources cover Kubernetes, Docker, file tailing, syslog, HTTP, Kafka, and more

- Sinks include Parseable, Elasticsearch, S3, Datadog, and most common backends

Pricing

Free and open source under the MPL 2.0 license. Datadog offers a commercial product built on Vector, Observability Pipelines, along with enterprise support.

Pros

- Best raw performance per CPU and memory unit of any aggregation tool

- VRL provides compile-time safety for transformation logic, reducing runtime failures

- End-to-end acknowledgement model prevents data loss during backpressure

- Active development with strong documentation and a supportive community

Cons

- Datadog ownership creates strategic uncertainty for teams specifically avoiding the Datadog ecosystem

- VRL is another tool-specific language to learn, though it is more approachable than Logstash's DSL

- Vector has no third-party plugin system by design; if a native source or sink is missing, options are limited

- Higher resource usage than Fluent Bit for edge deployments on resource-constrained nodes

5. Fluentd: Best general-purpose log routing tool

Fluentd is a CNCF graduated project and one of the most widely deployed log aggregation tools in the Kubernetes ecosystem. It has operated as a unified logging layer for well over a decade, collecting logs from diverse sources and routing them to a wide range of destinations through a catalog of more than 500 plugins. Fluentd's strength is breadth: if a log source or destination exists, there is almost certainly a Fluentd plugin for it.

Fluentd is most commonly deployed as a centralized aggregator that receives data from lightweight edge collectors (typically Fluent Bit) and applies richer transformations before routing to one or more backends. That two-tier architecture (Fluent Bit on the edge, Fluentd in the middle) is a common production pattern that balances resource efficiency with processing flexibility.

Key features

- Unified logging layer with a plugin architecture covering 500-plus input, output, and filter plugins

- Built-in buffering and retry logic for reliable delivery

- Tag-based routing that allows flexible log pipeline configuration

- JSON-structured logging throughout the pipeline

- Deep integration with Kubernetes through Helm charts and operator patterns
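Tag-based routing is easiest to understand with a small model. In Fluentd, each event carries a dot-separated tag, and match patterns use `*` for exactly one tag part and `**` for zero or more parts, with the first matching rule winning. The sketch below reproduces only that subset (it ignores `{a,b}` alternation), and the rule table and destination names are made up for illustration.

```python
from typing import List, Tuple


def tag_matches(pattern: str, tag: str) -> bool:
    """Match a dot-separated tag against a pattern using * and ** wildcards."""
    def walk(p: List[str], t: List[str]) -> bool:
        if not p:
            return not t
        if p[0] == "**":
            # ** may absorb zero or more tag parts.
            return any(walk(p[1:], t[i:]) for i in range(len(t) + 1))
        return bool(t) and p[0] in ("*", t[0]) and walk(p[1:], t[1:])
    return walk(pattern.split("."), tag.split("."))


def route(tag: str, rules: List[Tuple[str, str]], default: str = "unmatched") -> str:
    """Return the destination of the first rule whose pattern matches the tag."""
    for pattern, destination in rules:
        if tag_matches(pattern, tag):
            return destination
    return default
```

Because the first match wins, rule order matters: a broad pattern placed above a narrow one will shadow it, which is a common source of misrouted logs.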

Pricing

Free and open source under the Apache 2.0 license. Calyptia offers commercial Fluentd support.

Pros

- Broadest plugin ecosystem of any aggregation tool; compatible with almost every source and destination

- Proven at large-scale Kubernetes deployments with years of production hardening

- Tag-based routing provides expressive, flexible pipeline topology

- Apache 2.0 license with no usage restrictions

Cons

- Ruby runtime consumes 300 to 500 MB of RAM per instance, significantly higher than Fluent Bit

- Ruby's Global Interpreter Lock (GIL) limits per-process concurrency at high throughput

- Configuration syntax has a steep learning curve with custom `<source>`/`<match>`/`<filter>` DSL

- Plugin quality varies significantly across the ecosystem; unmaintained community plugins are a risk

6. Logstash: Best for complex ETL transforms in Elastic-centric pipelines

Logstash is the "L" in the ELK stack and has been the standard ETL processor for Elasticsearch deployments for over a decade. Its filter plugin library is the most extensive of any aggregation tool: Grok pattern matching for unstructured text, GeoIP lookups, field mutations, conditional routing, and more. For teams running Elasticsearch who need sophisticated parsing logic, Logstash remains the most capable option.
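Grok works by expanding named placeholders such as `%{IP:client}` into regular expressions with named capture groups. The sketch below imitates that mechanism with a tiny pattern table; the pattern definitions are simplified stand-ins rather than Logstash's actual pattern library, and `compile_grok` and `grok` are hypothetical helpers.

```python
import re

# Simplified stand-ins for a few common patterns (not Logstash's definitions).
PATTERNS = {
    "IP": r"\d{1,3}(?:\.\d{1,3}){3}",
    "WORD": r"\w+",
    "NUMBER": r"\d+",
    "URIPATH": r"/\S*",
}


def compile_grok(expression: str) -> re.Pattern:
    """Expand %{NAME:field} placeholders into named regex groups."""
    def expand(m: re.Match) -> str:
        return f"(?P<{m.group(2)}>{PATTERNS[m.group(1)]})"
    return re.compile(re.sub(r"%\{(\w+):(\w+)\}", expand, expression))


def grok(compiled: re.Pattern, line: str) -> dict:
    """Extract fields, or tag the event the way Logstash tags parse failures."""
    m = compiled.search(line)
    if m is None:
        return {"message": line, "tags": ["_grokparsefailure"]}
    return m.groupdict()
```

The failure branch is the important part operationally: events that do not match are not rejected, only tagged, so a pattern that quietly stops matching shows up as missing fields rather than as an error.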

For teams comparing log aggregation tools, Logstash comes with several trade-offs. Logstash runs on the JVM and is the most resource-intensive tool on this list. It requires 1 to 4 GB of heap memory per instance, takes 30 to 60 seconds to start due to JVM warm-up, and needs careful Grok pattern tuning to avoid silent parsing failures. Teams migrating away from Elasticsearch should evaluate whether they need Logstash's transformation depth or whether a lighter alternative covers the use case.

Key features

- Over 200 input, filter, and output plugins covering a wide range of sources and destinations

- Grok filter for parsing unstructured log data into structured fields

- Conditionals and complex branching logic within pipeline configuration

- Deep integration with Elasticsearch and the broader Elastic ecosystem

- Elasticsearch ingest pipelines can offload some Logstash transformations at lower cost

Pricing

Free to use. Logstash is source-available under the Elastic License / SSPL rather than fully open source, and self-hosting carries no license fee. Commercial Elastic Cloud deployments include Logstash as part of the Elastic stack.

Pros

- Most powerful transformation and parsing capabilities of any aggregation tool

- Grok pattern library covers thousands of common log formats

- Persistent queues provide strong delivery guarantees

- Mature with extensive documentation and community knowledge

Cons

- JVM dependency means a typical heap of 1 to 4 GB and slow startup times

- Grok patterns are difficult to debug; errors can silently drop or misparse fields

- SSPL licensing change created uncertainty and drove some teams to alternatives

- Not practical for edge collection: resource footprint prohibits DaemonSet deployment

- Hard to justify for teams moving away from Elasticsearch, since its deepest integrations are Elastic-specific

7. Cribl Stream: Best enterprise log routing and telemetry pipeline control

Cribl Stream is a commercial observability pipeline platform focused on enterprise-scale data routing. Its primary value proposition is reducing log volume before data reaches expensive backends: by filtering, sampling, and aggregating at the routing layer, Cribl can reduce ingestion volume by 40 to 60 percent for teams paying per-GB at Splunk or Datadog rates. A visual pipeline builder and reprocessing capability add operational flexibility that code-only tools lack.
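Volume reduction at the routing layer usually combines two moves: dropping whole classes of events and sampling repetitive ones. The following sketch shows both with deterministic one-in-N sampling. It is a generic illustration, not Cribl's implementation, and the level names and `keep_one_in` value are assumptions.

```python
from typing import Iterable, List, Tuple


def reduce_volume(events: Iterable[dict],
                  drop_levels: Tuple[str, ...] = ("DEBUG",),
                  sample_level: str = "INFO",
                  keep_one_in: int = 4) -> List[dict]:
    """Drop noisy levels outright and keep every Nth event of the sampled level."""
    kept: List[dict] = []
    seen = 0
    for event in events:
        if event["level"] in drop_levels:
            continue
        if event["level"] == sample_level:
            seen += 1
            if (seen - 1) % keep_one_in != 0:
                continue  # sampled out
        kept.append(event)  # everything else (e.g. ERROR) passes untouched
    return kept
```

The achievable reduction depends entirely on the level mix of the input, so a figure like 40 to 60 percent is best validated against a sample of your own traffic before committing to it.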

Cribl is positioned as a control plane between sources and destinations rather than as a first-class log analytics tool. It is most relevant for large enterprises with existing investments in Splunk or Datadog who want to reduce those platforms' ingestion costs without replacing them entirely.

Key features

- Visual drag-and-drop pipeline builder for configuration without YAML

- Data reduction capabilities: filtering, sampling, and aggregation before backend delivery

- Replay functionality to reprocess historical data through updated pipeline configurations

- Support for Splunk HEC, syslog, OTLP, and other common input formats

- Route the same data to multiple destinations simultaneously

- Role-based access control and audit logging for enterprise governance

Pricing

Free tier up to 1 TB/day for Cribl Stream. Enterprise tier with clustering, advanced RBAC, and support is available via sales with pricing not publicly listed.

Pros

- Visual pipeline builder lowers the barrier for non-YAML configuration

- Data reduction can meaningfully reduce costs at backends with per-GB pricing

- Replay capability provides a safety net for pipeline configuration changes

- Multi-destination routing in a single configuration

Cons

- Adds another proprietary tool to the pipeline rather than reducing total complexity

- Node.js runtime has higher resource usage compared to C or Rust alternatives

- Advanced features (clustering, enterprise RBAC) require paid Enterprise tier with opaque pricing

- Most valuable when optimizing expensive Splunk or Datadog deployments; less relevant for teams building on cost-efficient backends like Parseable

8. Filebeat: Best lightweight file shipping into existing Elastic workflows

Filebeat is Elastic's lightweight log file shipper, part of the Beats family designed to tail log files and forward events to Elasticsearch or Logstash. It runs as a Go binary with a 30 to 50 MB RAM footprint, handles file rotation and resume on restart through a registry, and includes pre-built modules for common log formats including Nginx, Apache, MySQL, and Linux system logs.
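The registry mechanism amounts to persisting a byte offset per file so that a restart resumes where reading stopped. Here is a minimal sketch of that idea using a JSON file as the registry. Filebeat's real registry tracks more state (such as file identity, to cope with rotation) and advances offsets only after the output acknowledges; `read_new_lines` is a hypothetical function.

```python
import json
import os
from typing import List


def read_new_lines(log_path: str, registry_path: str) -> List[str]:
    """Return complete lines appended since the last call, persisting the offset."""
    registry = {}
    if os.path.exists(registry_path):
        with open(registry_path) as f:
            registry = json.load(f)
    offset = registry.get(log_path, 0)

    with open(log_path, "rb") as f:
        f.seek(offset)
        data = f.read()

    # Consume only complete lines; a partial trailing line waits for the next call.
    end = data.rfind(b"\n") + 1
    lines = data[:end].decode("utf-8").splitlines()

    registry[log_path] = offset + end
    with open(registry_path, "w") as f:
        json.dump(registry, f)
    return lines
```

Note that this sketch saves the offset before anything is delivered downstream, which is the simplest form but would lose lines if the process crashed between reading and shipping.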

Filebeat is a strong choice when the pipeline destination is Elasticsearch and log sources are file-based. Outside the Elastic ecosystem, its value is limited: the transformation capabilities are minimal, it does not support metrics or traces, and the Elastic License limits redistribution in some contexts.

Key features

- Lightweight Go binary with 30 to 50 MB RAM consumption in typical deployments

- Pre-built modules for common applications: Nginx, Apache, MySQL, Suricata, and more

- Registry-based file tracking that resumes from the last recorded offset after a restart

- Backpressure handling with built-in rate limiting

- Processors for lightweight field transformation before shipping

- Compatible with both Elasticsearch and Logstash as destinations

Pricing

Free to use, and source-available under the Elastic License. Available as part of the Elastic Stack or standalone.

Pros

- Extremely simple setup for file-based log collection in Elastic deployments

- Pre-built modules reduce time to first indexed log for common applications

- Lightweight resource footprint suitable for DaemonSet deployment

- File registry lets collection resume after a restart without losing data, with at-least-once delivery

Cons

- Tightly coupled to the Elastic ecosystem: functionality outside Elasticsearch is limited

- Transformation capabilities are minimal; complex parsing requires Logstash or an Elasticsearch ingest pipeline

- Elastic License restricts some redistribution use cases

- Not suitable for non-file log sources or multi-signal telemetry pipelines

- Does not handle metrics or traces; a separate agent is needed for full observability

9. Datadog: Best if you want log aggregation inside a broader observability suite

Datadog is not a log aggregation tool in the traditional sense. It is a full observability platform that includes its own collection agent, ingestion pipeline, and backend storage in a single SaaS product. Including it here reflects how many teams evaluate it: as an alternative to building a custom aggregation pipeline and log backend separately.

For teams already using Datadog for infrastructure monitoring and APM, the Datadog Agent handles log collection directly, the Logs Pipelines handle in-platform enrichment, and trace-to-log correlation works natively without any external glue. That integration depth is the primary reason teams choose Datadog for log aggregation alongside their existing monitoring.

Key features

- Datadog Agent handles log collection, metrics, and traces in a single agent per host

- Logs Pipelines for parsing, enrichment, and routing at ingestion within the platform

- Trace-to-log correlation through shared trace and span IDs

- 750-plus integrations for cloud services, databases, and applications

- Log patterns and anomaly detection for surfacing unusual behavior

- Compliance archiving with rehydration for long-term cost-reduced retention

Pricing

Log ingestion: $0.10/GB. Log indexing: $1.70 per million events at 15-day retention. Infrastructure host monitoring and APM are billed separately on top of log costs.

At 100 GB/day, ingestion and indexing combined run approximately $5,000 to $6,000/month before infrastructure monitoring and APM costs.
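The monthly figure can be reproduced from the two list prices, but only after choosing an average event size, because indexing is billed per event rather than per GB. The sketch below assumes 1 KB per event and that every ingested log is indexed; both are assumptions introduced here, not figures from Datadog.

```python
from typing import Tuple


def datadog_log_cost(gb_per_day: float,
                     avg_event_bytes: int = 1_000,    # assumed average event size
                     days: int = 30,
                     ingest_per_gb: float = 0.10,
                     index_per_million: float = 1.70) -> Tuple[float, float]:
    """Return (ingestion, indexing) monthly cost in dollars."""
    total_gb = gb_per_day * days
    events = total_gb * 1_000_000_000 / avg_event_bytes
    ingestion = total_gb * ingest_per_gb
    indexing = events / 1_000_000 * index_per_million
    return ingestion, indexing


ingestion, indexing = datadog_log_cost(100)
```

Under these assumptions ingestion is about $300 and indexing about $5,100, for roughly $5,400 per month. Indexing dominates, and smaller average events push the total higher because the same volume contains more events.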

Pros

- Single-agent collection for logs, metrics, and traces with no pipeline assembly required

- Native trace-to-log correlation and strong UI for cross-signal investigation

- 750-plus integrations with no custom pipeline configuration

- No infrastructure to manage for the collection or backend layer

Cons

- Among the most expensive log management and aggregation options at scale

- SaaS only with no self-hosted option for data residency requirements

- Ingestion and indexing billed separately, making accurate cost forecasting difficult

- Proprietary agents create switching friction; migrating away requires rebuilding collection configuration

- Short default retention (15 days) before costs escalate significantly

10. Grafana Loki: Best open-source log backend for Grafana users

Grafana Loki is included in this list because teams evaluating cloud-native log aggregation tools frequently consider it as a combined collection plus storage solution when paired with a collector such as Promtail, the Grafana Agent, or Grafana Alloy, which Grafana Labs now recommends in place of the other two. It is the most widely deployed open-source log backend for Kubernetes environments, and for teams already running Prometheus and Grafana, it extends the existing investment naturally.

Loki's design is opinionated: it indexes only metadata labels, not log content, and stores compressed log chunks on object storage. This keeps storage costs low and indexing overhead minimal, but it limits full-text search and creates cardinality constraints for high-cardinality fields. Full incident workflows typically require the complete LGTM stack (Loki, Grafana, Tempo, Mimir).
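
The trade-off described above can be illustrated with a toy model. This is a sketch of the label-indexing idea only, not Loki's implementation: `ToyLabelStore` and `max_streams` are hypothetical names, and real chunks are compressed and stored on object storage rather than held in Python lists.

```python
from collections import defaultdict
from math import prod


class ToyLabelStore:
    """Toy model: only label sets are indexed; log lines sit in per-stream chunks."""

    def __init__(self):
        # One "stream" per unique label set.
        self.streams = defaultdict(list)

    def push(self, labels, line):
        self.streams[frozenset(labels.items())].append(line)

    def query(self, selector, contains=""):
        wanted = set(selector.items())
        hits = []
        for stream_labels, lines in self.streams.items():
            if wanted <= stream_labels:  # cheap: match on indexed labels
                # Expensive: content is not indexed, so every line is scanned.
                hits += [line for line in lines if contains in line]
        return hits


def max_streams(label_cardinalities):
    """Upper bound on stream count: the product of each label's distinct values."""
    return prod(label_cardinalities.values())


store = ToyLabelStore()
store.push({"namespace": "shop", "app": "checkout"}, "payment timeout for order 42")
store.push({"namespace": "shop", "app": "cart"}, "item added")
print(store.query({"namespace": "shop"}, contains="timeout"))
print(max_streams({"namespace": 10, "app": 50}))
print(max_streams({"namespace": 10, "app": 50, "request_id": 100_000}))
```

The last two calls show why high-cardinality fields are a poor fit for labels in this model: adding a single label with 100,000 distinct values multiplies the possible stream count by 100,000.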

Key features

- Label-indexed storage model with minimal indexing overhead

- Object storage backend (S3, GCS, Azure Blob) for cost-efficient long-term retention

- LogQL query language with pipeline syntax modeled on PromQL

- Horizontal scalability via a microservice architecture (distributor, ingester, querier, compactor)

- Deep Grafana integration for log exploration and dashboard building

- Multi-tenancy support for SaaS and enterprise deployments

- Pairs with Promtail, the Grafana Agent, or their successor Grafana Alloy for Kubernetes log collection

Pricing

Open source (self-hosted): Free. Runs on your own infrastructure with object storage as the backend.

Grafana Cloud: Free tier with 50 GB of logs per month. Paid plans charge approximately $0.50/GB beyond the free tier.

Pros

- Free and open source with a large community

- Low storage costs due to object storage backend

- Excellent Grafana integration for teams already running Grafana dashboards

- Scales horizontally for large Kubernetes environments

Cons

- No indexed full-text search: every query starts from a label selector, and content filters scan the matching chunks at query time

- Label cardinality limits can cause ingester OOM failures and write degradation at scale

- Three separate query languages across the LGTM stack (LogQL, PromQL, TraceQL)

- Full observability requires managing four separate systems (Loki, Mimir, Tempo, Grafana)

- Loki-specific chunk format creates data portability constraints compared to open Parquet storage

Which log aggregation tool should you choose?

The right choice depends on what you are aggregating, where you need it to go, and how much operational overhead you can absorb. These seven decision lenses cover the most common evaluation scenarios:

- Start with what needs to be aggregated: Map whether your environment produces application logs, infrastructure logs, Kubernetes container logs, cloud service logs, or file-based logs, then prioritize tools with native support for those sources. A gap at the collection layer means a separate agent or custom configuration for that source, which adds fragility. Fluent Bit and the OpenTelemetry Collector cover the widest range of Kubernetes and cloud sources with the least additional configuration. Parseable also allows you to collect data from 70+ sources.

- Check whether logs need observability context: If engineers regularly pivot from log events to metrics and distributed traces during incident response, choose an aggregation tool that fits a unified observability pipeline rather than treating logs as a standalone problem. Parseable and the OpenTelemetry Collector both support all three signal types, which allows a single pipeline to carry logs, metrics, and traces to the same backend.

- Look at the storage and indexing model: Do not evaluate a log aggregation or backend tool only by its collection interface. Understand whether it indexes full log content (Elasticsearch), metadata labels (Loki), or columnar fields (Parseable), because that design choice directly determines cost, search behavior, and performance at scale. Label-only indexing is cheap but limits full-text investigation. Full inverted indexing is powerful but expensive. Columnar storage with predicate pushdown delivers both search flexibility and cost efficiency.

- Validate how the tool handles cardinality: Environments with high-cardinality attributes, such as pod names, IP addresses, user IDs, and request IDs, stress label-based systems significantly. Loki's label cardinality limits are well-documented and can cause ingester failures in Kubernetes environments at scale. Parseable has no cardinality limits because every field is a Parquet column. Verify how your candidate backend handles this before you deploy at production volume.

- Compare pricing against real ingestion patterns: Review how the tool charges for data volume, indexing, and retention, then test that model against your projected daily log throughput rather than only today's low-volume baseline. Per-GB pricing that looks reasonable at 10 GB/day can become the largest line item in the infrastructure budget at 500 GB/day.

- Define retention and archive needs early: Decide how long logs need to remain searchable or recoverable for debugging, audits, and compliance requirements, then confirm whether the tool supports low-cost archival and practical rehydration for older data. Platforms with short retention defaults (Datadog's 15 days, Papertrail's tier-based search windows) can produce significantly higher costs for teams with month-scale or year-scale requirements.

- Check deployment flexibility and lock-in risk: If data residency, compliance, or cost control requirements may drive a backend change in the future, favor tools and pipelines built around open, vendor-neutral collection paths. The OpenTelemetry Collector with OTLP output and Parseable with open Parquet on S3 is a combination that allows the backend to change with a configuration update rather than a re-instrumentation project.
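
The storage and indexing lens above mentions columnar storage with predicate pushdown. As a rough illustration of that idea, the sketch below keeps min/max statistics for one column per row group and skips any group whose statistics rule out a match. It is a toy model in the spirit of row-group statistics in columnar formats such as Parquet, not the implementation of Parseable or any specific engine, and all names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class RowGroup:
    min_status: int
    max_status: int
    rows: list  # list of dicts, one per log event


def build_row_groups(rows, size):
    """Split rows into fixed-size groups and record min/max of the status column."""
    groups = []
    for start in range(0, len(rows), size):
        chunk = rows[start:start + size]
        statuses = [r["status"] for r in chunk]
        groups.append(RowGroup(min(statuses), max(statuses), chunk))
    return groups


def query_status_at_least(groups, threshold):
    """Return matching rows and how many row groups actually had to be scanned."""
    matches, scanned = [], 0
    for group in groups:
        if group.max_status < threshold:
            continue  # statistics prove no row can match, so skip the whole group
        scanned += 1
        matches += [r for r in group.rows if r["status"] >= threshold]
    return matches, scanned


statuses = [200, 200, 201, 204, 200, 404, 200, 200, 500, 503, 200, 200]
groups = build_row_groups([{"status": s} for s in statuses], size=4)
matches, scanned = query_status_at_least(groups, 500)
print(f"{len(matches)} matches, scanned {scanned} of {len(groups)} row groups")
```

How much work is skipped depends on how the data is laid out: statistics prune well when similar values cluster together (for example, data sorted by time) and poorly when every group spans the full value range.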

Final verdict

The market for log aggregation tools is moving in a clear direction. Edge collection is getting lighter: Fluent Bit and the OpenTelemetry Collector have effectively replaced Fluentd and Logstash as the default collection agents in new Kubernetes deployments. The middle layers are getting thinner: teams that previously ran Kafka-backed pipelines are routing directly from collectors to backends. And the backends themselves are getting simpler: S3-native platforms with columnar storage are replacing indexed clusters that require dedicated operational expertise.

What this means in practice is that teams building a logging pipeline in 2026 have a better opportunity than they did five years ago to keep the architecture simple. The question is not which five tools to stitch together, but which two or three to use and whether the combination serves the full need from collection through investigation.

Parseable is the strongest modern choice for the backend half of that architecture. It accepts log data directly from Fluent Bit, Vector, and the OpenTelemetry Collector. It stores everything on S3 in open Parquet format. It queries with standard SQL. It handles logs, metrics, and traces in one platform. And it costs significantly less than indexed alternatives at scale, because the storage economics of object storage are fundamentally different from the compute-heavy cost model of traditional search clusters.

For teams that want fewer moving parts between collected logs and useful analysis, Parseable plus a lightweight edge collector is the architecture worth evaluating in 2026.

Ready to simplify your logging pipeline? Try Parseable Cloud free and start ingesting from your existing collector in minutes.

FAQ

What are log aggregation tools?

Log aggregation tools are software components that collect log data from multiple sources, process it in transit (parsing, filtering, enriching), and route it to a destination for storage or analysis. Their primary function is centralization: turning fragmented log output from many services, containers, and hosts into a consistent, queryable data stream. Log aggregation tools are responsible for the collection and routing phase of the logging pipeline. They are distinct from log management platforms, which handle the downstream storage, indexing, querying, and retention lifecycle.
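
The collect, process, and route phases can be sketched in a few lines. This is a deliberately simplified model: the `LEVEL service message` line format, the function names, and the two destinations are all assumptions for illustration, and real collectors add buffering, backpressure, and many more input and output types.

```python
import json


def parse(line):
    """Parse 'LEVEL service message' into a dict; return None if malformed."""
    parts = line.strip().split(" ", 2)
    if len(parts) != 3:
        return None
    level, service, message = parts
    return {"level": level, "service": service, "message": message}


def keep(record):
    """Filter: drop noisy debug output before it leaves the host."""
    return record["level"] != "DEBUG"


def enrich(record, host):
    """Enrich: attach context the application itself does not know."""
    return {**record, "host": host}


def route(record):
    """Route: pick a destination name based on record content."""
    return "alerts" if record["level"] == "ERROR" else "archive"


def aggregate(lines, host):
    """Run each raw line through parse, filter, enrich, and route."""
    out = {"alerts": [], "archive": []}
    for line in lines:
        record = parse(line)
        if record is None or not keep(record):
            continue
        out[route(enrich(record, host))].append(json.dumps(enrich(record, host)))
    return out


sample = ["INFO api started", "DEBUG api noisy detail", "ERROR db connection refused", "garbage"]
print(aggregate(sample, host="node-1"))
```

Nothing in this sketch stores or queries data; it only shapes and forwards it, which is the boundary between aggregation and log management described above.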

What is the difference between log aggregation and log management?

Log aggregation covers collection and routing. Log aggregation software receives raw log data from sources and delivers it to a destination. It does not store data long-term, does not provide a search interface, and does not manage retention. Log management covers the full lifecycle of log data after collection: durable storage, indexing for search, query interfaces, alerting rules, retention policies, and compliance controls. Most production systems need both: a lightweight aggregation layer at the edge and a log management backend for storage and investigation. The trend in 2026 is toward collapsing these layers by routing directly from a lightweight collector into a platform like Parseable that handles both ingestion and analytics without intermediate stages.

Do I need both a log aggregation tool and a log management platform?

In most production environments, yes. A lightweight collection agent on each node or container host is the most practical way to collect logs at the source with buffering and retry. That agent then routes data to a backend that stores it durably and exposes it for query. The exception is when application teams instrument directly against an analytics platform's HTTP API or when the collection agent and backend are combined (as with the Datadog Agent + Datadog platform). The goal of reducing pipeline complexity is not to eliminate the collection layer, but to minimize the number of processing stages between the collection layer and the point where engineers can query results.
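
The "buffering and retry" behavior mentioned above is the main thing a collection agent adds over a direct HTTP call. A minimal sketch follows, assuming a caller-supplied `send` function that raises `ConnectionError` on failure; the class name, parameters, and backoff values are hypothetical and not taken from any particular agent.

```python
import time


class BufferedSender:
    """Buffer records, send them in batches, and retry with exponential backoff."""

    def __init__(self, send, batch_size=3, max_retries=3, base_delay=0.01, sleep=time.sleep):
        self.send = send              # hypothetical transport: callable taking a list
        self.batch_size = batch_size
        self.max_retries = max_retries
        self.base_delay = base_delay
        self.sleep = sleep            # injectable so tests need not wait
        self.buffer = []

    def add(self, record):
        self.buffer.append(record)
        if len(self.buffer) >= self.batch_size:
            self.flush()

    def flush(self):
        """Try to deliver the buffer; return True on success, False if retries ran out."""
        if not self.buffer:
            return True
        batch = list(self.buffer)
        for attempt in range(self.max_retries + 1):
            try:
                self.send(batch)
                del self.buffer[:len(batch)]  # drop only what was delivered
                return True
            except ConnectionError:
                if attempt < self.max_retries:
                    self.sleep(self.base_delay * 2 ** attempt)
        return False  # records stay buffered for the next flush
```

A production agent would also bound the buffer (in memory or on disk) and decide what to drop when the backend stays unreachable; this sketch keeps everything until delivery succeeds.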

What is the best open-source log aggregation tool?

For edge collection in Kubernetes, Fluent Bit is the best open-source log aggregation tool due to its minimal resource footprint, native Kubernetes metadata enrichment, and broad plugin support. For multi-signal pipelines, the OpenTelemetry Collector is the strongest open-source choice because it handles logs, metrics, and traces in a single vendor-neutral agent. For teams that want to combine open-source collection with an open-source backend, Fluent Bit or the OTel Collector routing to Parseable (AGPL-3.0) provides a complete open-source pipeline with SQL querying and S3 storage.

What should I look for in a cloud-native log aggregation tool?

Cloud-native log aggregation tools should support native Kubernetes DaemonSet deployment, automatic Kubernetes metadata enrichment (namespace, pod, container, node labels), horizontal scalability for variable log volumes, and OTLP or HTTP output for flexible backend routing. Low resource consumption per node matters because collection agents run on every host. Native support for cloud provider log services (CloudWatch Logs, GCP Cloud Logging, Azure Monitor) reduces the number of separate collection paths needed. Vendor-neutral output formats prevent lock-in at the collection layer, which is important if the backend may change as requirements evolve.
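
As a small illustration of Kubernetes metadata enrichment, the sketch below derives pod, namespace, and container names from a container log file path. It assumes the common `/var/log/containers/<pod>_<namespace>_<container>-<container id>.log` naming convention; the function name and the sample path are hypothetical, and real collectors typically also query the Kubernetes API for labels and annotations that cannot be read from the path.

```python
import re

# Assumed path convention: <pod>_<namespace>_<container>-<64 hex chars>.log
CONTAINER_LOG_PATH = re.compile(
    r"^/var/log/containers/"
    r"(?P<pod>[^_]+)_(?P<namespace>[^_]+)_(?P<container>.+)"
    r"-(?P<container_id>[0-9a-f]{64})\.log$"
)


def enrich_from_path(record, path):
    """Return a copy of record with a 'kubernetes' field when the path matches."""
    match = CONTAINER_LOG_PATH.match(path)
    if match is None:
        return dict(record)  # leave non-container logs untouched
    return {**record, "kubernetes": match.groupdict()}


path = "/var/log/containers/checkout-7d9f8b6c5-x2k4q_shop_app-" + "a" * 64 + ".log"
enriched = enrich_from_path({"message": "order placed"}, path)
print(enriched["kubernetes"]["namespace"], enriched["kubernetes"]["pod"])
```

Doing this at the edge means every record arrives at the backend already tagged, so queries can filter by namespace or pod without a separate lookup.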

Can Parseable replace parts of a traditional logging pipeline?

Yes. Parseable's native OTLP endpoint and direct HTTP ingestion API allow it to serve as the backend in a two-component pipeline: a lightweight collector (Fluent Bit, OTel Collector) at the edge and Parseable handling everything from ingestion onward. This eliminates the message queue (Kafka), the ETL layer (Logstash), and the separate search backend (Elasticsearch) that traditional pipelines require. Teams that move from a five-component ELK pipeline to Fluent Bit plus Parseable reduce their operational surface significantly while gaining SQL querying, unified MELT (metrics, events, logs, traces) observability, and S3-native storage economics. See our ELK to Parseable migration guide for a practical walkthrough.